Tag: model

Apple’s new AI model edits photos according to text prompts from users

Google changes Bard’s name to Gemini: unveils new Android app and advanced AI model

Microsoft brings new AI image functionality to Copilot, adds new model Deucalion

Doctor Who star transforms into kidnapped glamour model Chloe Ayling in first look at new BBC thriller

A DOCTOR Who star has transformed into Chloe Ayling for a new BBC thriller about the model’s terrifying kidnapping.

The model and former Celebrity Big Brother star, 26, was snatched by a sex trafficking gang and held captive for six days in a remote Italian farmhouse.

Masked men kidnapped her after luring her to Milan with promises of a lucrative photoshoot which turned out to be fake.

BBC bosses are dramatising the horrific ordeal in a brand new BBC Three thriller called Kidnapped.

The broadcaster has revealed Nadia Parkes will play the model for the six-part series.

A first look image shows Nadia, 28, in the role as her character faces the press.

The show will tell Ayling’s story based on her own book about the event.

It will also draw on detailed research, extensive interviews and documented legal proceedings.

Georgia Lester, who is best known for writing Channel 4’s Skins and smash-hit show Killing Eve, has penned the series.

The Beeb has not yet revealed when the show will air but it’s expected later this year.

Previously speaking about the show, Chloe told the Mirror: “I am excited that BBC Studios are telling my story and that the wider world will get to know the truth about what happened to me and learn of the many details that weren’t brought to light originally.

“Georgia Lester and the team have been incredibly supportive in our conversations, and I couldn’t be happier that they are making this series.”

New Apple AI Model Edits Images Based on Natural Language Input

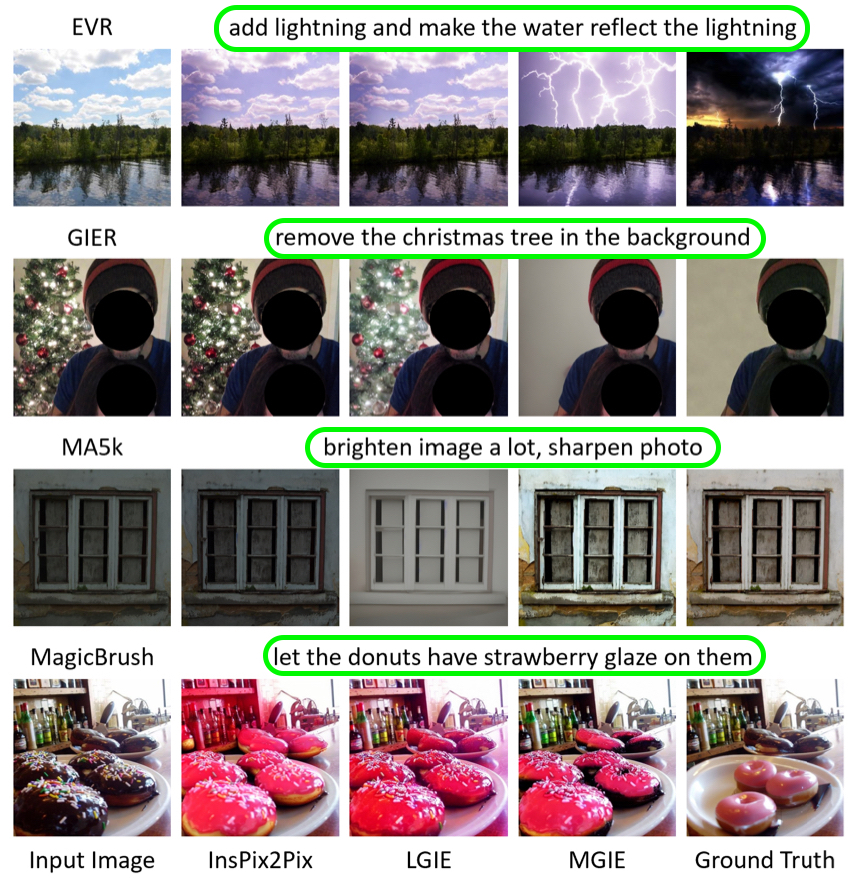

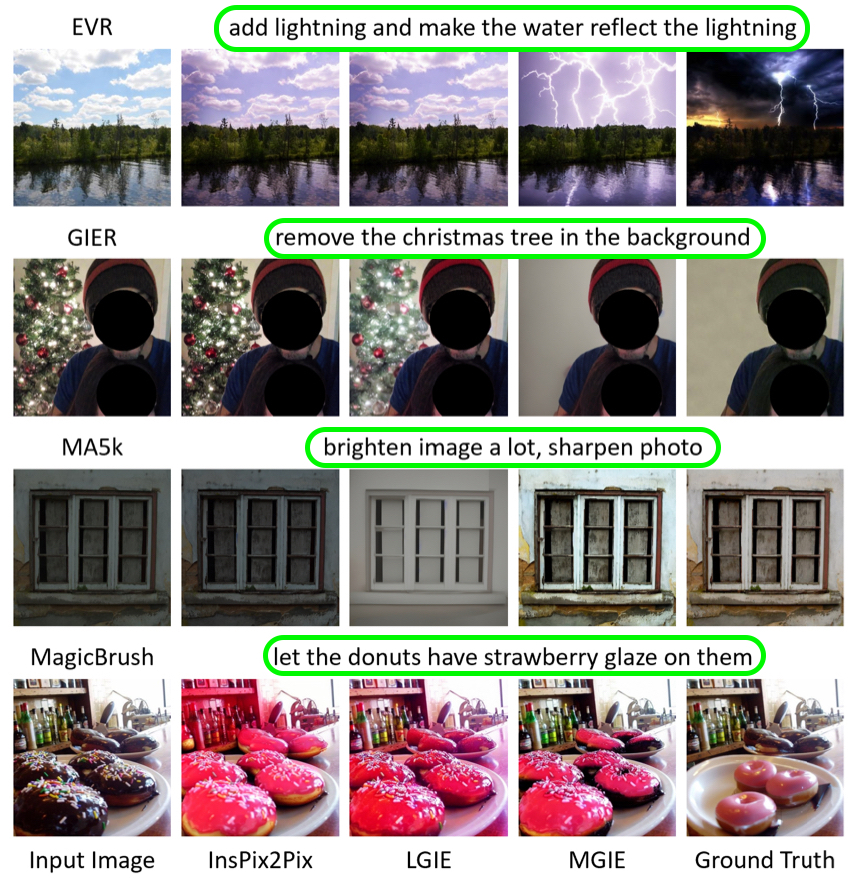

Called “MGIE,” which stands for MLLM-Guided Image Editing, Apple’s new model uses multimodal large language models (MLLMs) to interpret user requests and perform pixel-level manipulations.

The model is capable of editing various aspects of images. Global photo enhancements can include brightness, contrast, or sharpness, or the application of artistic effects like sketching. Local editing can modify the shape, size, color, or texture of specific regions or objects in an image, while Photoshop-style modifications can include cropping, resizing, rotating, and adding filters, or even changing backgrounds and blending images.

A user input for a photo of a pizza could be to “make it look more healthy.” Using common sense reasoning, the model can add vegetable toppings, such as tomatoes and herbs. A global optimization input request might take the form of “add contrast to simulate more light,” while a Photoshop-style modification could be made by asking the model to remove people from the background of a photo, shifting the focus of the image to the subject’s facial expression.

Apple collaborated with University of California researchers to create MGIE, which was presented in a paper at the International Conference on Learning Representations (ICLR) 2024. The model is available on GitHub, and includes the code, data, and pre-trained models.

This is Apple’s second breakthrough in AI research in as many months. In late December, Apple revealed that it had made strides in deploying large language models (LLMs) on iPhones and other Apple devices with limited memory by developing a new flash memory utilization technique.

For the last several months, Apple has been testing an “Apple GPT” rival that could compete with ChatGPT. According to Bloomberg’s Mark Gurman, work on AI is a priority for Apple, with the company designing an “Ajax” framework for large language models.

Both The Information and analyst Jeff Pu claim that Apple will have some kind of generative AI feature available on the iPhone and iPad around late 2024, which is when iOS 18 will be coming out. iOS 18 is said to include an enhanced version of Siri with ChatGPT-like generative AI functionality, and has the potential to be the “biggest” software update in the iPhone’s history, according to Gurman.

This article, “New Apple AI Model Edits Images Based on Natural Language Input” first appeared on MacRumors.com

This AMD RX 7900 XT graphics card is just £699 – and it’s a rare white OC model too

It’s rare to see an RX 7900 XT graphics card available for under £700 – especially a white overclocked model! – but that’s exactly what you can get from British retailer Quozo at the moment, with code JAN24 knocking £8 off an already low price. The same card is a whopping £824 at Amazon UK right now, making this a much better deal!

Apple Testing Slim Camera Bump Design for Base Model iPhone 16

Apple’s latest prototype features a vertical camera arrangement with a pill-shaped raised surface, and we’ve created a series of mockups based on internal designs to help readers visualize the change.

The pill-shaped camera bump features two separate camera rings for the Wide and Ultrawide cameras, adopting some of the stylistic cues from earlier prototype designs. A vertical camera arrangement has remained consistent throughout the prototyping process, and Apple has not changed the position of the flash or the camera lenses with this latest update.

Our findings align with schematics recently shared by Majin Bu on social media website X. Bu’s leaked images also feature the same updated design.

The latest camera look that Apple is experimenting with draws inspiration from older iPhone models, such as the iPhone X. The iPhone X also had a pill-shaped camera with a slim bump design. While Apple used a vertical camera for the iPhone 12 as well, it had a wider square bump that also housed the flash and microphone.

With the vertical camera layout, we expect Apple to bring Spatial Video recording to the base model iPhone 16 and iPhone 16 Plus. Right now, the iPhone 15 models have a diagonal camera arrangement and are not able to capture spatial video, a feature that is so far limited to the iPhone 15 Pro models and the Vision Pro headset itself.

Apart from the updated camera bump design, more recent iPhone 16 prototypes include slight modifications to the Action Button and Capture Button, as we reported previously. The latest prototype units feature a smaller Action Button, akin to the one used on iPhone 15 Pro, along with a pressure-sensitive Capture Button that sits flush with the frame of the device. While the camera bump design is largely a cosmetic update affecting the glass backplate, the change to the Action Button during the prototyping phase is more significant. Apple’s updates suggest that it has scrapped its initial idea of bringing a capacitive Action Button to the iPhone 16 range.

Note that what we’ve shared here is sourced from pre-production information, and it may not ultimately reflect the design of the final mass-production units that are released this fall. As we’ve seen with previous iPhone models, Apple creates multiple designs and hardware configurations as part of the development process, and the iPhone 16 range is no exception. More tangible information will come to light as the devices move closer to the EVT (Engineering Validation Testing) phase of development.

For additional details on what to expect, check out our dedicated rumor roundup pages for iPhone 16 and iPhone 16 Pro.

This article, “Apple Testing Slim Camera Bump Design for Base Model iPhone 16” first appeared on MacRumors.com
