Tag: 3d

Asus Brings Glasses-Free 3D To OLED Laptops

Asus’ Spatial Vision 3D tech is debuting on two laptops in Q2 this year: the ProArt Studiobook 16 3D OLED (H7604) and the Vivobook Pro 16 3D OLED (K6604). Each laptop has a 16-inch, 3200×2000 OLED panel with a 120 Hz refresh rate. The OLED panel is topped with a layer of optical resin, a glass panel, and a lenticular lens layer. The lenticular lens works with a pair of eye-tracking cameras so the laptop can render a separate real-time image for each eye that adjusts as you move. In a press briefing, an Asus spokesperson said that the OLED screens’ claimed gray-to-gray response time of 0.2 ms, combined with OLED’s extremely high contrast, eliminates crosstalk between the left- and right-eye images, making content look more realistic. However, Asus’ product pages for the laptops acknowledge that experiences may vary, and some users may still suffer from “dizziness or crosstalk due to other reasons, and this varies according to the individual.” Asus said it’s looking to offer demos, which would be worth trying before committing to this unique feature.
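In the simplest two-view case, a lenticular display sends alternating pixel columns to the left and right eyes, and eye tracking decides which set of columns each eye should get. The Python sketch below illustrates that idea under heavily simplified assumptions that are not Asus’ documented implementation: vertical lenses covering exactly two columns each, whole-pixel rather than subpixel mapping, and a hypothetical `zone_width_mm` parameter standing in for the width of one viewing zone. The helper names `view_phase` and `interleave` are illustrative only.

```python
"""Hypothetical sketch: eye-tracked two-view column interleaving.

Assumptions (not Asus's documented method): vertical lenses, each covering
exactly two pixel columns, and a tracked head offset that only decides which
of the two columns under each lens feeds the left eye.
"""

from typing import List

Image = List[List[int]]  # rows of grayscale pixel values


def view_phase(eye_offset_mm: float, zone_width_mm: float) -> int:
    """Return 0 or 1: the column parity the left eye currently sees.

    Hypothetical model: moving sideways by one viewing-zone width swaps
    which columns reach which eye.
    """
    return int(eye_offset_mm // zone_width_mm) % 2


def interleave(left: Image, right: Image, phase: int) -> Image:
    """Build the panel image by alternating left-view and right-view columns."""
    if len(left) != len(right) or any(len(a) != len(b) for a, b in zip(left, right)):
        raise ValueError("left and right views must have the same size")
    out: Image = []
    for row_l, row_r in zip(left, right):
        # Column c is taken from the left view when (c + phase) is even.
        out.append([l if (c + phase) % 2 == 0 else r
                    for c, (l, r) in enumerate(zip(row_l, row_r))])
    return out
```

For example, `interleave([[1, 1, 1, 1]], [[2, 2, 2, 2]], 0)` yields `[[1, 2, 1, 2]]`; a head movement that flips the phase to 1 swaps the columns to `[[2, 1, 2, 1]]`. Real panels typically use finer, often slanted, subpixel layouts and more precise tracking.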

On top of the lenticular lens is a 2D/3D liquid crystal switching layer, which is in turn topped with a glass front panel with an anti-reflective coating. According to Asus, it’ll be easy to switch from 2D mode to 3D and back again, and when the laptops aren’t in 3D mode, their displays will look like highly specced OLED screens. The laptops can apply a 3D effect to any game, movie, or other content that supports 3D. However, content not designed for 3D display may appear more “stuttery,” according to The Verge, which saw a demo. The laptops are aimed primarily at professionals who create and work with 3D models and content, such as designers and architects. Both will ship with Asus’ Spatial Vision Hub software, which includes a Model Viewer; a Player for movies and videos; a Photo Viewer that turns side-by-side photos shot with a 180-degree camera into a single stereoscopic 3D image; and Connector, a plug-in that Asus’ product page says is compatible with “various apps and tools, so you can easily view any project in 3D.”
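Side-by-side stereo photos, like those the Photo Viewer handles, commonly pack the left-eye view into the left half of the frame and the right-eye view into the right half. The sketch below shows one way a 2D/3D mode switch could decide what each eye receives. The half-frame layout, the hypothetical `split_side_by_side` and `eye_views` helpers, and the assumed 2D-mode behavior are illustrations only, not Asus’ documented Spatial Vision Hub behavior.

```python
"""Hypothetical sketch of a 2D/3D mode switch for side-by-side (SBS) frames.

Assumptions: an SBS frame stores the left-eye view in its left half and the
right-eye view in its right half; in 2D mode both eyes get the full frame.
"""

from typing import List, Tuple

Image = List[List[int]]  # rows of grayscale pixel values


def split_side_by_side(frame: Image) -> Tuple[Image, Image]:
    """Split an SBS frame into (left_view, right_view)."""
    width = len(frame[0])
    if width % 2:
        raise ValueError("side-by-side frame width must be even")
    half = width // 2
    return [row[:half] for row in frame], [row[half:] for row in frame]


def eye_views(frame: Image, mode: str) -> Tuple[Image, Image]:
    """Return the (left-eye, right-eye) images for '2d' or '3d' mode."""
    if mode == "2d":
        # Assumed: the switching layer neutralizes the lenses, so both eyes
        # see the same full frame (copied so callers can't alias the input).
        return [row[:] for row in frame], [row[:] for row in frame]
    if mode == "3d":
        return split_side_by_side(frame)
    raise ValueError("mode must be '2d' or '3d'")
```

With `frame = [[1, 2, 3, 4]]`, 3D mode returns `([[1, 2]], [[3, 4]])`, while 2D mode hands the full frame to both eyes. The 3D-mode pair is what a column interleaver would then combine into the panel image.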

Read more of this story at Slashdot.

HyperX takes personalization mainstream with 3D printed peripheral add-ons

CES 2023: You can now 3D print your skin supplement habit, thanks to Neutrogena

Every year at CES, Neutrogena has rolled out innovative and fun beauty care tech — from 2019’s customizable 3D sheet masks to 2020’s Next Gen Neutrogena Skin360 App. And this year is no different, with the launch of Nourished x Neutrogena Skin360 SkinStacks.

It appears Neutrogena has jumped on the skin supplement trend (collagen, anyone?) that you might recognize from your Instagram feed or an influencer ad. But unlike typical skin supplements that you mix into your smoothie or add to your vitamin regimen, Neutrogena’s take is personalized.

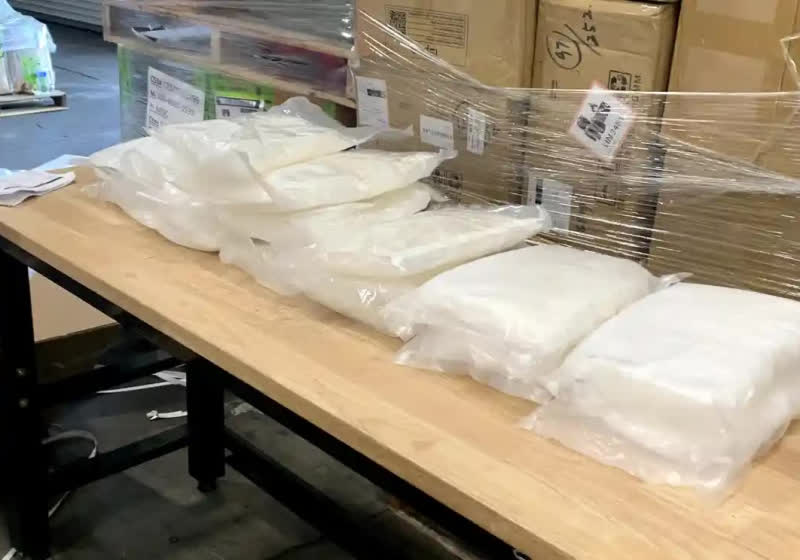

At CES 2023, the skin care giant launched its collaboration with Nourished, a company that produces 3D-printed “super nutrient” gummies. The collaboration uses AI to give users personalized, on-demand, 3D-printed skin supplements called SkinStacks.

“At Neutrogena, we are grounded in the belief that beauty begins with healthy skin and are proud of a heritage that consistently delivers skincare solutions built at the intersection of science and technology, in a way that makes sophisticated science simple and inclusive for our consumers,” Roberto Khoury, senior vice president of Neutrogena, said in a press release. “Working with Nourished allows us to further that commitment by marrying our award-winning digital skin assessment with Nourished’s elegant 3D printing technology to create on-demand dietary supplements to help consumers meet their personal skincare goals.”

Credit: Courtesy Neutrogena

The innovation builds on Neutrogena’s Skin360 App, which launched at CES 2020. Using the app’s Skin360 assessment tool, people take photos of their freshly cleansed skin, answer a few questions about their skin and skincare goals, and then receive a recommendation for the supplement stack best suited to them. Nourished then 3D prints the vegan, sugar-free gummies and ships them in daily compostable packets.

Beware: A gummy a day might not keep all of your skincare woes away, though. Dermatologist Hadley King told HuffPost that “beauty supplements are basically rebranded multivitamins,” meaning most people get all the nutrients they need from food.

The jury is still out on whether or not Neutrogena’s personalized, on-demand, 3D-printed skin supplements will change your skin — but it sure is a fun addition to beauty tech.

Canceled Duke Nukem 3D remake is the latest Duke project to leak

How USD can become an open 3D standard of the metaverse

OpenAI Releases Point-E, an AI For 3D Modeling



Daily Crunch: New Point-E AI allows users to generate 3D objects from detailed text prompts

Hello, friends, and welcome to Daily Crunch, bringing you the most important startup, tech and venture capital news in a single package.

Daily Crunch: New Point-E AI allows users to generate 3D objects from detailed text prompts by Christine Hall originally published on TechCrunch

OpenAI releases Point-E, which is like DALL-E but for 3D modeling

OpenAI, the artificial intelligence startup behind the popular DALL-E text-to-image generator (and co-founded by Elon Musk), announced on Tuesday the release of its newest generative model, Point-E, which can produce 3D point clouds directly from text prompts. Whereas existing systems like Google’s DreamFusion typically need several hours and multiple GPUs to generate their output, Point-E needs only one GPU and a minute or two.

3D modeling is used across a variety of industries and applications. The CGI effects of modern movie blockbusters, video games, VR and AR, NASA’s moon crater mapping missions, Google’s heritage site preservation projects, and Meta’s vision for the metaverse all hinge on 3D modeling capabilities. However, creating photorealistic 3D images is still a resource- and time-consuming process, despite NVIDIA’s work to automate object generation and Epic Games’ RealityScan mobile app, which lets anyone with an iOS phone scan real-world objects into 3D models.

Text-to-image systems like OpenAI’s DALL-E 2, Craiyon, DeepAI, Prisma Labs’ Lensa, and Stability AI’s Stable Diffusion have rapidly gained popularity, notoriety and infamy in recent years. Text-to-3D is an offshoot of that research. Unlike similar systems, Point-E splits the job between two models: its text-to-image model “leverages a large corpus of (text, image) pairs, allowing it to follow diverse and complex prompts, while our image-to-3D model is trained on a smaller dataset of (image, 3D) pairs,” the OpenAI research team led by Alex Nichol wrote in Point·E: A System for Generating 3D Point Clouds from Complex Prompts, published last week. “To produce a 3D object from a text prompt, we first sample an image using the text-to-image model, and then sample a 3D object conditioned on the sampled image. Both of these steps can be performed in a number of seconds, and do not require expensive optimization procedures.”

If you were to input a text prompt, say, “A cat eating a burrito,” Point-E would first generate a single synthetic rendered view of said burrito-eating cat. It would then run that generated image through a series of diffusion models to create a 3D, RGB point cloud of the initial image: first a coarse 1,024-point cloud, then a finer 4,096-point one. “In practice, we assume that the image contains the relevant information from the text, and do not explicitly condition the point clouds on the text,” the research team points out.

These diffusion models were each trained on “millions” of 3D models, all converted into a standardized format. “While our method performs worse on this evaluation than state-of-the-art techniques,” the team concedes, “it produces samples in a small fraction of the time.” If you’d like to try it out for yourself, OpenAI has posted the project’s open-source code on GitHub.
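The text-to-image-to-point-cloud cascade described above can be outlined in Python. In this sketch, only the stage order and the point counts (1,024 coarse points, 4,096 final points, each carrying XYZ coordinates and RGB color) come from the article. Every function is a hypothetical stub that returns random data of the right shape; none of it is the API of OpenAI’s published code, and the real stages are trained diffusion models. The assumption that the upsampling stage keeps the coarse points and adds new ones is likewise made for illustration.

```python
"""Hypothetical outline of a Point-E-style cascade, using random stubs."""

import random
from typing import List, Tuple

RGB = Tuple[float, float, float]
Image = List[List[RGB]]
Point = Tuple[float, float, float, float, float, float]  # x, y, z, r, g, b


def text_to_image(prompt: str, size: int = 64) -> Image:
    """Stage 1 stub: stands in for the text-to-image model's synthetic view."""
    rng = random.Random(prompt)  # seeded by the prompt so runs are repeatable
    return [[(rng.random(), rng.random(), rng.random()) for _ in range(size)]
            for _ in range(size)]


def _random_point(rng: random.Random) -> Point:
    """Random XYZ in [-1, 1] plus RGB color in [0, 1)."""
    x, y, z = (rng.uniform(-1.0, 1.0) for _ in range(3))
    r, g, b = (rng.random() for _ in range(3))
    return (x, y, z, r, g, b)


def image_to_coarse_cloud(image: Image, n_points: int = 1024) -> List[Point]:
    """Stage 2 stub: conditioned on the image only; the text is never passed in."""
    rng = random.Random(len(image))
    return [_random_point(rng) for _ in range(n_points)]


def upsample_cloud(coarse: List[Point], image: Image, n_total: int = 4096) -> List[Point]:
    """Stage 3 stub: keep the coarse points and add new ones up to n_total.

    `image` mirrors the real stage's conditioning input; the stub ignores it.
    """
    rng = random.Random(len(coarse))
    extra = [_random_point(rng) for _ in range(n_total - len(coarse))]
    return coarse + extra


def text_to_point_cloud(prompt: str) -> List[Point]:
    """Run the stages in order: text -> image -> 1,024 points -> 4,096 points."""
    image = text_to_image(prompt)
    coarse = image_to_coarse_cloud(image)
    return upsample_cloud(coarse, image)


if __name__ == "__main__":
    cloud = text_to_point_cloud("A cat eating a burrito")
    print(len(cloud), len(cloud[0]))  # 4096 6
```

Note that the second stage never sees the prompt, mirroring the researchers’ remark that the point clouds are not explicitly conditioned on the text.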