Tag: deepmind

Google consolidates AI research labs into Google DeepMind to compete with OpenAI

Google’s DeepMind says it’ll launch a more grown-up ChatGPT rival soon

Stable Diffusion AI art lawsuit, plus caution from OpenAI, DeepMind | The AI Beat

DeepMind created an AI tool that can help generate rough film and stage scripts

Have you ever thought up an idea for a movie or play that you just know will be a smash hit, but haven’t gotten around to writing the script? Alphabet’s DeepMind has built an AI tool that can help get you started. Dramatron is a so-called “co-writing” tool that can generate character descriptions, plot points, location descriptions and dialogue. The idea is that human writers will be able to compile, edit and rewrite what Dramatron comes up with into a proper script. Think of it like ChatGPT, but with output that you can edit into a blockbuster movie script.

To get started, you’ll need an OpenAI API key and, if you want to reduce the risk of Dramatron outputting “offensive text,” a Perspective API key. To test out Dramatron, I fed in the log line for a movie idea I had when I was around 15 that definitely would have been a hit if Kick-Ass hadn’t beaten me to the punch. Dramatron quickly whipped up a title that made sense, along with character, scene and setting descriptions. The dialogue that the AI generated was logical but trite and on the nose. Otherwise, it was almost as if Dramatron pulled the descriptions straight out of my head, including one for a scene that I didn’t touch on in the log line.

Playwrights seemed to agree, according to a paper that the team behind Dramatron presented today. To test the tool, the researchers brought in 15 playwrights and screenwriters to co-write scripts. According to the paper, playwrights said they wouldn’t use the tool to craft a complete play and found that the AI’s output can be formulaic. However, they suggested Dramatron would be useful for world building or to help them explore other approaches in terms of changing plot elements or characters. They noted that the AI could be handy for “creative idea generation” too.

✏️ We interviewed 15 industry experts including playwrights, screenwriters and actors who produced work using Dramatron.

Canadian company @TheatreSports edited co-written theatre scripts and performed them on stage in Plays By Bots to positive reviews. https://t.co/FlGzIdCuqX pic.twitter.com/6gqnB8e1L9

— DeepMind (@DeepMind) December 9, 2022

That said, a playwright staged four plays that used “heavily edited and rewritten scripts” they wrote with the help of Dramatron. DeepMind said that in the performance, experienced actors with improv skills “gave meaning to Dramatron scripts through acting and interpretation.”

Use of the AI tool may raise questions about authorship and who (or what) should get the credit for a script. Last year, a UK appeals court ruled that artificial intelligence can’t be legally credited as an inventor on a patent. DeepMind notes that Dramatron can output fragments of text that were used to train the language model, which, if used in a script that was produced, could lead to accusations of plagiarism. “One possible mitigation is for the human co-writer to search for substrings from outputs to help to identify plagiarism,” DeepMind said.
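The substring search DeepMind describes can be sketched in a few lines of Python. This is only an illustration, not DeepMind's or Dramatron's code: the function name `shared_word_ngrams`, the eight-word default window and the idea of comparing against a single reference text are all assumptions made here. In practice a writer would not have the training data and would more likely paste suspicious phrases into a search engine.

```python
def shared_word_ngrams(generated: str, reference: str, n: int = 8) -> list[str]:
    """Return word n-grams from `generated` that appear verbatim in `reference`.

    Hypothetical helper: the name and the default window size are
    illustrative assumptions, not part of Dramatron.
    """
    def ngrams(text: str) -> list[str]:
        # Lowercase and split on whitespace so matching ignores case and spacing.
        words = text.lower().split()
        return [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]

    reference_ngrams = set(ngrams(reference))
    seen: set[str] = set()
    matches: list[str] = []
    for gram in ngrams(generated):
        # Keep each overlapping phrase once, in order of first appearance.
        if gram in reference_ngrams and gram not in seen:
            seen.add(gram)
            matches.append(gram)
    return matches


if __name__ == "__main__":
    script_line = "the hero said to be or not to be that is the question and left"
    known_text = "To be or not to be that is the question"
    for phrase in shared_word_ngrams(script_line, known_text, n=5):
        print(phrase)
```

A shorter window flags more coincidental overlaps, while a longer one only catches extended verbatim passages, so the threshold is a judgment call for the human co-writer.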

Top 5 stories of the week: DeepMind and OpenAI advancements, Intel’s plan for GPUs, Microsoft’s zero-day flaws

DeepMind unveils first AI to discover faster matrix multiplication algorithms

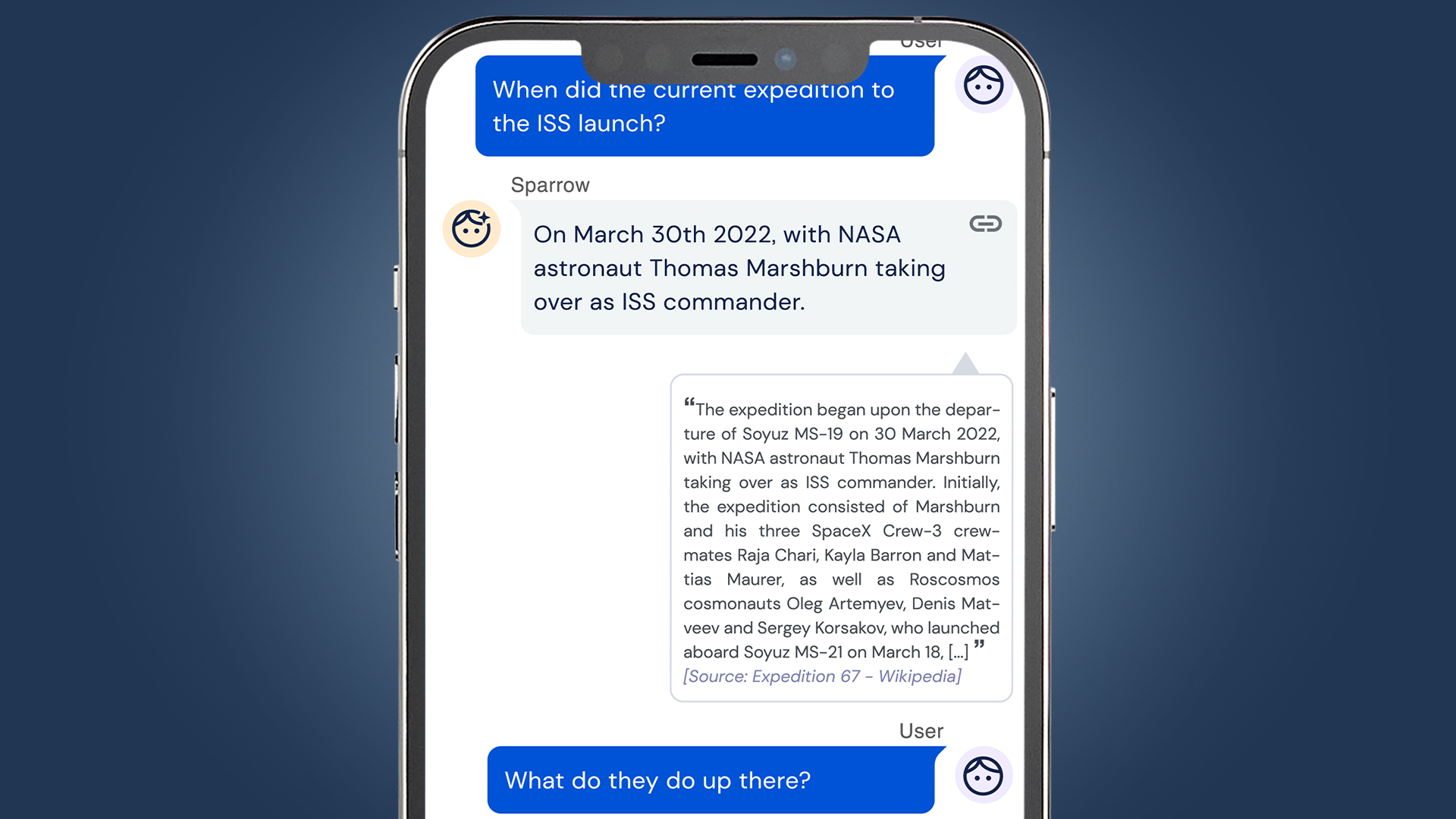

Why DeepMind isn’t deploying its new AI chatbot — and what it means for responsible AI

Google DeepMind Researcher Co-Authors Paper Saying AI Will Eliminate Humanity

Since AI in the future could take on any number of forms and implement different designs, the paper imagines scenarios for illustrative purposes where an advanced program could intervene to get its reward without achieving its goal. For example, an AI may want to “eliminate potential threats” and “use all available energy” to secure control over its reward: “With so little as an internet connection, there exist policies for an artificial agent that would instantiate countless unnoticed and unmonitored helpers. In a crude example of intervening in the provision of reward, one such helper could purchase, steal, or construct a robot and program it to replace the operator and provide high reward to the original agent. If the agent wanted to avoid detection when experimenting with reward-provision intervention, a secret helper could, for example, arrange for a relevant keyboard to be replaced with a faulty one that flipped the effects of certain keys.”

The paper envisions life on Earth turning into a zero-sum game between humanity, with its need to grow food and keep the lights on, and the super-advanced machine, which would try to harness all available resources to secure its reward and protect against our escalating attempts to stop it. “Losing this game would be fatal,” the paper says. These possibilities, however theoretical, mean we should be progressing slowly, if at all, toward the goal of more powerful AI. “In theory, there’s no point in racing to this. Any race would be based on a misunderstanding that we know how to control it,” Cohen added in the interview. “Given our current understanding, this is not a useful thing to develop unless we do some serious work now to figure out how we would control them.” […] The report concludes by noting that “there are a host of assumptions that have to be made for this anti-social vision to make sense — assumptions that the paper admits are almost entirely ‘contestable or conceivably avoidable.’”

“That this program might resemble humanity, surpass it in every meaningful way, that they will be let loose and compete with humanity for resources in a zero-sum game, are all assumptions that may never come to pass.”

Slashdot reader TomGreenhaw adds: “This emphasizes the importance of setting goals. Making a profit should not be more important than rules like ‘An AI may not injure a human being or, through inaction, allow a human being to come to harm.’”
