Tag: edits

Apple made an AI image tool that lets you make edits by describing them

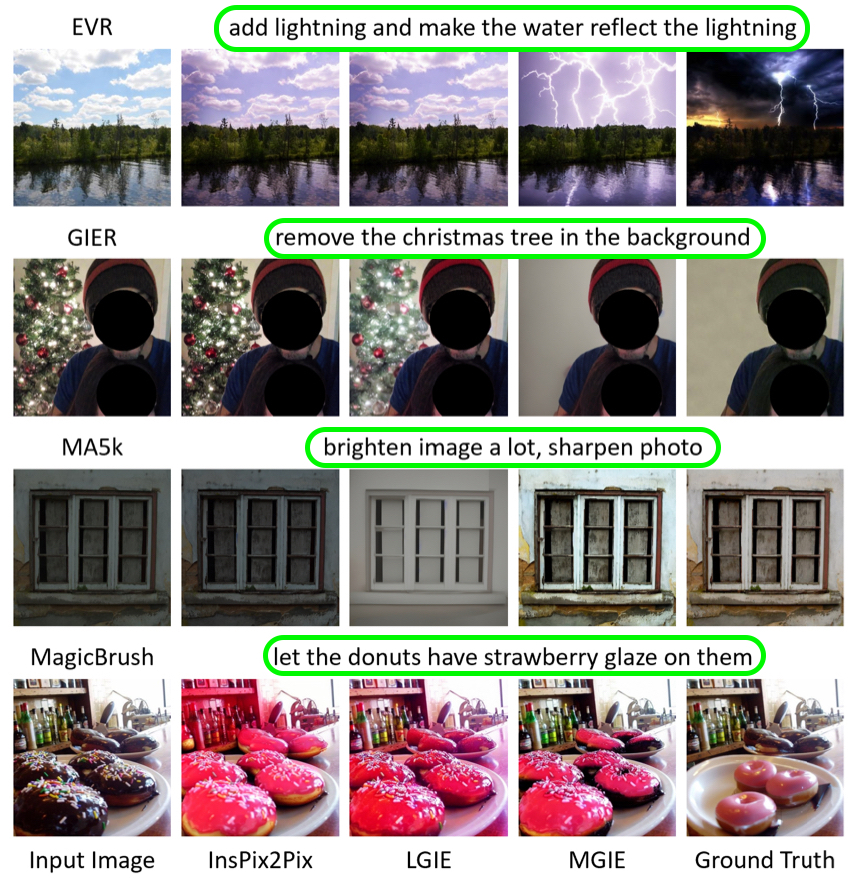

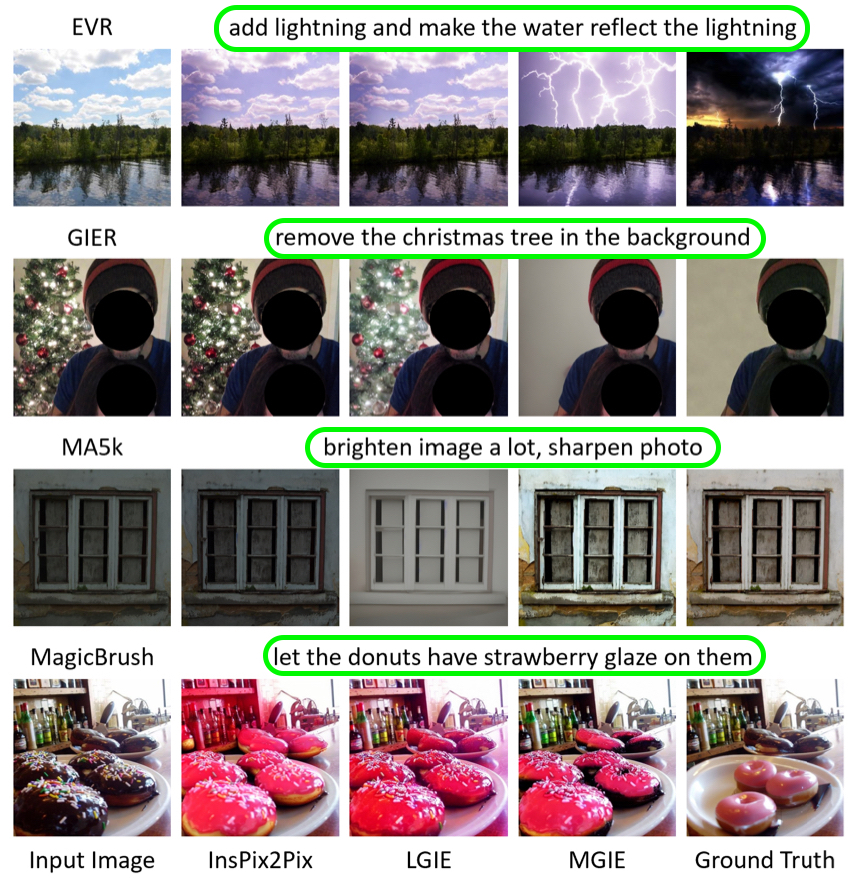

Apple researchers released a new model that lets users describe in plain language what they want to change in a photo without ever touching photo editing software.

The MGIE model, which Apple worked on with the University of California, Santa Barbara, can crop, resize, flip, and add filters to images all through text prompts.

MGIE, which stands for MLLM-Guided Image Editing, can be applied to simple and more complex image editing tasks like modifying specific objects in a photo to make them a different shape or come off brighter. The model blends two different uses of multimodal language models. First, it learns how to interpret user prompts. Then it “imagines” what the edit would look like (asking for a bluer sky in a photo becomes…

Carl Weathers Super Bowl Commercial Will Still Air, Family Consulted On Edits

Carl Weathers isn’t done acting just yet. The popular Mandalorian and Rocky star passed away last week, leaving behind memorable characters and an iconic resume. Weathers recently appeared in teasers for FanDuel’s Super Bowl commercial focused on the outcome of a live field goal attempt by former NFL star Rob Gronkowski. Despite his death, Weathers will still appear in the final commercial, albeit not as originally envisioned.

Variety reports, however, that FanDuel intends to make some unspecified changes to its commercial after consulting with Weathers’ family. “The family has been very supportive that they would still like to see Carl in the work,” FanDuel’s Andrew Sneyd said.

“We need to change what we are doing in the Bowl. The live event itself carries forward and as is. Rob will kick the field goal and he will be even more inspired to make it. He really enjoyed meeting Carl and found him to be such an optimistic and energetic person,” added Sneyd. Gronkowski’s kick will take place just before gameplay starts, with a follow-up commercial to appear during the Super Bowl. The changes are focused on how Weathers is portrayed in the commercial.

New Apple AI Model Edits Images Based on Natural Language Input

Called “MGIE,” which stands for MLLM-Guided Image Editing, it uses multimodal large language models (MLLMs) to interpret user requests and perform pixel-level manipulations.

The model is capable of editing various aspects of images. Global photo enhancements can include brightness, contrast, or sharpness, or the application of artistic effects like sketching. Local editing can modify the shape, size, color, or texture of specific regions or objects in an image, while Photoshop-style modifications can include cropping, resizing, rotating, and adding filters, or even changing backgrounds and blending images.

A user input for a photo of a pizza could be to “make it look more healthy.” Using common sense reasoning, the model can add vegetable toppings, such as tomatoes and herbs. A global optimization input request might take the form of “add contrast to simulate more light,” while a Photoshop-style modification could be made by asking the model to remove people from the background of a photo, shifting the focus of the image to the subject’s facial expression.

Apple collaborated with University of California researchers to create MGIE, which was presented in a paper at the International Conference on Learning Representations (ICLR) 2024. The model is available on GitHub, and includes the code, data, and pre-trained models.

This is Apple’s second breakthrough in AI research in as many months. In late December, Apple revealed that it had made strides in deploying large language models (LLMs) on iPhones and other Apple devices with limited memory by developing a new flash memory utilization technique.

For the last several months, Apple has been testing an “Apple GPT” chatbot that could compete with ChatGPT. According to Bloomberg’s Mark Gurman, work on AI is a priority for Apple, with the company designing an “Ajax” framework for large language models.

Both The Information and analyst Jeff Pu claim that Apple will have some kind of generative AI feature available on the iPhone and iPad around late 2024, which is when iOS 18 will be coming out. iOS 18 is said to include an enhanced version of Siri with ChatGPT-like generative AI functionality, and has the potential to be the “biggest” software update in the iPhone’s history, according to Gurman.

This article, “New Apple AI Model Edits Images Based on Natural Language Input” first appeared on MacRumors.com


Google Pixel vulnerability allows bad actors to undo Markup screenshot edits and redactions

When Google began rolling out Android’s March security patch earlier this week, the company addressed a “High” severity vulnerability involving the Pixel’s Markup screenshot tool. Over the weekend, Simon Aarons and David Buchanan, the reverse engineers who discovered CVE-2023-21036, shared more information about the security flaw, revealing Pixel users are still at risk of their older images being compromised due to the nature of Google’s oversight.

In short, the “aCropalypse” flaw allowed someone to take a PNG screenshot cropped in Markup and undo at least some of the edits in the image. It’s easy to imagine scenarios where a bad actor could abuse that capability. For instance, if a Pixel owner used Markup to redact an image that included sensitive information about themselves, someone could exploit the flaw to reveal that information. You can find the technical details on Buchanan’s blog.
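The article itself does not describe the mechanism. As publicly described by the researchers, the edited screenshot was written over the original file without truncating it, so bytes from the original could remain after the end of the new, smaller PNG. Under that assumption, a rough sketch of a check for leftover bytes after a PNG's IEND chunk might look like the following. The function names are hypothetical, and this only flags files that carry trailing data; it does not recover anything and is not the researchers' own tooling.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def trailing_bytes_after_iend(data: bytes) -> int:
    """Return how many bytes follow the first IEND chunk of a PNG."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    offset = len(PNG_SIGNATURE)
    # Each chunk is: 4-byte length, 4-byte type, payload, 4-byte CRC.
    while offset + 12 <= len(data):
        length, chunk_type = struct.unpack(">I4s", data[offset:offset + 8])
        offset += 12 + length
        if chunk_type == b"IEND":
            return max(len(data) - offset, 0)
    raise ValueError("no IEND chunk found")


def _chunk(chunk_type: bytes, payload: bytes) -> bytes:
    """Build one PNG chunk (used only to make a test image below)."""
    crc = zlib.crc32(chunk_type + payload) & 0xFFFFFFFF
    return struct.pack(">I", len(payload)) + chunk_type + payload + struct.pack(">I", crc)


def tiny_png() -> bytes:
    """Build a minimal 1x1 grayscale PNG entirely in memory."""
    ihdr = struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0)
    idat = zlib.compress(b"\x00\x00")  # one filter byte plus one pixel
    return (PNG_SIGNATURE + _chunk(b"IHDR", ihdr)
            + _chunk(b"IDAT", idat) + _chunk(b"IEND", b""))


if __name__ == "__main__":
    clean = tiny_png()
    suspicious = clean + b"leftover"  # stand-in for stale original data
    print(trailing_bytes_after_iend(clean))
    print(trailing_bytes_after_iend(suspicious))
```

A nonzero result means only that the file carries extra data after its logical end. Other tools can append data there for unrelated reasons, so this is a hint to re-export the image, not proof that it is affected.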

Introducing acropalypse: a serious privacy vulnerability in the Google Pixel’s inbuilt screenshot editing tool, Markup, enabling partial recovery of the original, unedited image data of a cropped and/or redacted screenshot. Huge thanks to @David3141593 for his help throughout! pic.twitter.com/BXNQomnHbr

— Simon Aarons (@ItsSimonTime) March 17, 2023

According to Buchanan, the flaw has existed for about five years, coinciding with the release of Markup alongside Android 9 Pie in 2018. And therein lies the problem. While March’s security patch will prevent Markup from compromising future images, some screenshots Pixel users may have shared in the past are still at risk.

It’s hard to say how concerned Pixel users should be about the flaw. According to a forthcoming FAQ page Aarons and Buchanan shared with 9to5Google and The Verge, some websites, including Twitter, process images in such a way that someone could not exploit the vulnerability to reverse edit a screenshot or image. Users on other platforms aren’t so lucky. Aarons and Buchanan specifically identify Discord, noting the chat app did not patch out the exploit until its recent January 17th update. At the moment, it’s unclear if images shared on other social media and chat apps were left similarly vulnerable.

Google did not immediately respond to Engadget’s request for comment and more information. The March security update is currently available on the Pixel 4a, 5a, 7 and 7 Pro, meaning Markup can still produce vulnerable images on some Pixel devices. It’s unclear when Google will push the patch to other Pixel devices. If you own a Pixel phone without the patch, avoid using Markup to share sensitive images.

This article originally appeared on Engadget at https://www.engadget.com/google-pixel-vulnerability-allows-bad-actors-to-undo-markup-screenshot-edits-and-redactions-195322267.html?src=rss

Maybe stop with the uncomfortable Pedro Pascal thirst edits

Atomic Heart devs apologise for racist cartoon clip and promise edits

Researchers Say ‘Suspicious’ Edits on Wikipedia Reek of Pro-Russian Propaganda

Wikipedia (the online encyclopedia that helps you learn stuff, waste time, and seem more knowledgeable than you really are) is not immune to foreign propaganda, according to new research. A study published Monday exposed a network of shadowy editors, the likes of which have been attempting to sway the narrative about…