Tag: risks

6 major risks of using ChatGPT, according to a new study

Execs see risks of operating without ethical tech standards | Deloitte

Shocking: Congress seemed to actually understand AI’s potential risks during hearing

AI just had its big day on Capitol Hill.

Sam Altman, the CEO of ChatGPT’s parent company OpenAI and a figurehead of the current AI discourse, appeared before the Senate Judiciary Committee’s Subcommittee on Privacy, Technology, and the Law for the first time on Tuesday. Members of Congress pressed Altman, along with IBM chief privacy and trust officer Christina Montgomery and AI expert Gary Marcus, an NYU emeritus professor of psychology and neural science, on numerous aspects of generative AI, including its potential risks and what regulation in the space could look like.

And the hearing went…surprisingly okay?

I know it’s hard to believe. It’s a weird feeling to even write this, having covered numerous Congressional hearings on tech over the years.

Unlike in, say, all the previous Congressional hearings on social media, members of Congress seemed to have a general understanding of what the potential risks posed by AI actually look like. Septuagenarians may not get online content moderation, but they certainly understand the concept of job loss due to emerging technology. Section 230 may be a fairly confusing law for lawmakers who aren’t web-savvy, but when it comes to potential concerns about AI, they are certainly familiar with copyright law.

Another breath of fresh air coming out of the AI hearing: It was a fairly bipartisan discussion. Hearings on social media frequently devolve into tit-for-tat needling between Democrats and Republicans over issues like misinformation and online censorship.

Online discussions around AI may be focused on “woke” chatbots and whether AI models should be able to utter racial slurs, but there was none of that at the hearing. Members of both parties seemed to focus solely on the topic at hand, which, according to the hearing’s title, was “Oversight of A.I.: Rules for Artificial Intelligence.” Even when Senator John Kennedy (R-LA) floated a hypothetical scenario in which AI developers try to destroy the world, the experts steered the conversation back on track by pivoting to datasets and AI training.

Perhaps the biggest tell that things went as well as a Congressional tech hearing could go: There are no viral memes showcasing how out of touch U.S. lawmakers are. The hearing opened dramatically, with Senator Richard Blumenthal (D-CT), the chairman of the subcommittee, playing deepfake audio of himself reading a ChatGPT-generated script. (Audio deepfakes use AI models to clone voices.) From there, the proceedings remained productive to the end.

Yes, the bar is low when the comparison is to previous tech hearings. And the hearing wasn’t perfect. A major part of the current conversation around AI is just how dangerous the technology is. Much of this is straight-up hype from the industry, an edgy attempt to market AI’s immense, and profitable, capabilities. (Remember when crypto was going to be the future, and anyone not throwing money into the space was “NGMI,” or “not gonna make it”?) Lawmakers also, as usual, seem dismayingly open to letting members of the industry they’re looking to regulate design those very regulations. And while Altman agreed on the need for regulation, we’ve heard the same thing from other Silicon Valley types, including some who were, in retrospect, likely bad-faith actors.

Still, this hearing showed that Congress has the potential to avoid the mistakes it made with social media. But remember: There’s just as much potential, if not more, for lawmakers to screw it all up.

Starmer risks complacency by suggesting job all but done for Labour | Adam Boulton

Dow Jones Newswires: Reserve Bank of Australia still jittery about stubborn inflation risks

White House: Big Tech bosses told to protect public from AI risks

Daily Crunch: Due to ‘growing concerns about security risks,’ Samsung bans workers from using generative AI

Hello, friends, and welcome to Daily Crunch, bringing you the most important startup, tech and venture capital news in a single package.

Daily Crunch: Due to ‘growing concerns about security risks,’ Samsung bans workers from using generative AI by Christine Hall originally published on TechCrunch

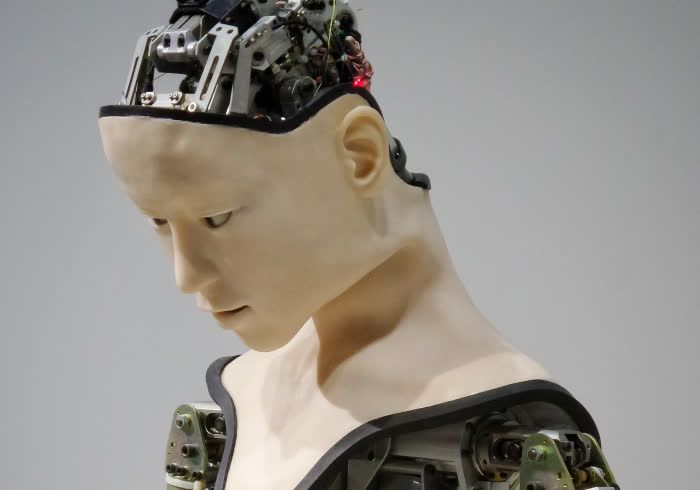

‘Godfather of AI’ has quit Google to warn people of AI risks

Geoffrey Hinton, “the Godfather of AI,” has resigned from Google following the rapid rise of ChatGPT and other chatbots, in order to “freely speak out about the risks of AI,” he told the New York Times.

Hinton, who helped lay the groundwork for today’s generative AI, was an engineering fellow at Google for over a decade. Per the Times, part of him regrets his life’s work after seeing the danger generative AI poses. He worries about misinformation, and that the average person will “not be able to know what is true anymore.” In the near future, he fears, AI’s ability to automate tasks will not just replace drudge work but upend the entire job market.

Previously, Hinton thought the AI revolution was decades away. But since OpenAI launched ChatGPT in November 2022, the intelligence of the large language model (LLM) behind it has changed his mind. “Look at how it was five years ago and how it is now,” he said. “Take the difference and propagate it forwards. That’s scary.”

The debut of ChatGPT kicked off a lopsided three-way competition among ChatGPT, Microsoft Bing, and Google Bard. Lopsided, because GPT-4, which powers ChatGPT, also powers Bing. With two contenders coming for its core search business, Google scrambled to launch Bard despite internal concerns that it hadn’t been stress-tested enough for accuracy and safety.

Hinton clarified on Twitter after the Times article was published that he wasn’t criticizing Google specifically, and that he believes it has “acted very responsibly.” Instead, he is concerned about the broader risks of the warp-speed development of AI, driven by the competitive landscape. Without regulation or transparency, companies risk losing control of a potent technology. “I don’t think they should scale this up more until they have understood whether they can control it,” said Hinton.

That’s yet another expert calling for AI development to hit the pause button.