Tag: warns

Debt-ceiling breach could trigger ‘quick increases’ in credit-card rates, CFPB’s Rohit Chopra warns

Major Psychologists’ Group Warns of Social Media’s Potential Harm To Kids

While social media can provide opportunities for staying connected, especially during periods of social isolation, like the pandemic, the APA says adolescents should be routinely screened for signs of “problematic social media use.” The APA recommends that parents should also closely monitor their children’s social media feed during early adolescence, roughly ages 10-14. Parents should try to minimize or stop the dangerous content their child is exposed to, including posts related to suicide, self-harm, disordered eating, racism and bullying. Studies suggest that exposure to this type of content may promote similar behavior in some youth, the APA notes.

Another key recommendation is to limit the use of social media for comparison, particularly around beauty- or appearance-related content. Research suggests that when kids use social media to pore over their own and others’ appearance online, this is linked with poor body image and depressive symptoms, particularly among girls. As kids age and gain digital literacy skills, they should have more privacy and autonomy in their social media use, but parents should always keep an open dialogue about what they are doing online. The report also cautions parents to monitor their own social media use, citing research that shows that adults’ attitudes toward social media and how they use it in front of kids may affect young people.

The APA’s report does contain recommendations that could be picked up by policy makers seeking to regulate the industry. For instance, it recommends the creation of “reporting structures” to identify and remove or deprioritize social media content depicting “illegal or psychologically maladaptive behavior,” such as self-harm, harming others, and disordered eating. It also notes that the design of social media platforms may need to be changed to take into account “youths’ development capabilities,” including features like endless scrolling and recommended content. It suggests that teens should be warned “explicitly and repeatedly” about how their personal data could be stored, shared and used.

Read more of this story at Slashdot.

Market Extra: Stanley Druckenmiller warns of U.S. hard landing at Sohn conference, says debt-ceiling debate ‘really depressing’

America’s FTC Warns Businesses Not to Use AI to Harm Consumers

The warning came in a blog post titled “The Luring Test: AI and the engineering of consumer trust.”

In the 2014 movie Ex Machina, a robot manipulates someone into freeing it from its confines, resulting in the person being confined instead. The robot was designed to manipulate that person’s emotions, and, oops, that’s what it did. While the scenario is pure speculative fiction, companies are always looking for new ways — such as the use of generative AI tools — to better persuade people and change their behavior. When that conduct is commercial in nature, we’re in FTC territory, a canny valley where businesses should know to avoid practices that harm consumers…

As for the new wave of generative AI tools, firms are starting to use them in ways that can influence people’s beliefs, emotions, and behavior. Such uses are expanding rapidly and include chatbots designed to provide information, advice, support, and companionship. Many of these chatbots are effectively built to persuade and are designed to answer queries in confident language even when those answers are fictional. A tendency to trust the output of these tools also comes in part from “automation bias,” whereby people may be unduly trusting of answers from machines which may seem neutral or impartial. It also comes from the effect of anthropomorphism, which may lead people to trust chatbots more when designed, say, to use personal pronouns and emojis. People could easily be led to think that they’re conversing with something that understands them and is on their side.

Many commercial actors are interested in these generative AI tools and their built-in advantage of tapping into unearned human trust. Concern about their malicious use goes well beyond FTC jurisdiction. But a key FTC concern is firms using them in ways that, deliberately or not, steer people unfairly or deceptively into harmful decisions in areas such as finances, health, education, housing, and employment. Companies thinking about novel uses of generative AI, such as customizing ads to specific people or groups, should know that design elements that trick people into making harmful choices are a common element in FTC cases, such as recent actions relating to financial offers, in-game purchases, and attempts to cancel services. Manipulation can be a deceptive or unfair practice when it causes people to take actions contrary to their intended goals. Under the FTC Act, practices can be unlawful even if not all customers are harmed and even if those harmed don’t comprise a class of people protected by anti-discrimination laws.

The FTC attorney also warns against paid placement within the output of a generative AI chatbot. (“Any generative AI output should distinguish clearly between what is organic and what is paid.”) And in addition, “People should know if an AI product’s response is steering them to a particular website, service provider, or product because of a commercial relationship. And, certainly, people should know if they’re communicating with a real person or a machine…”

“Given these many concerns about the use of new AI tools, it’s perhaps not the best time for firms building or deploying them to remove or fire personnel devoted to ethics and responsibility for AI and engineering. If the FTC comes calling and you want to convince us that you adequately assessed risks and mitigated harms, these reductions might not be a good look.”

Thanks to Slashdot reader gluskabe for sharing the post.

Erik ten Hag warns Antony after Man Utd incident: “Don’t go over the top”

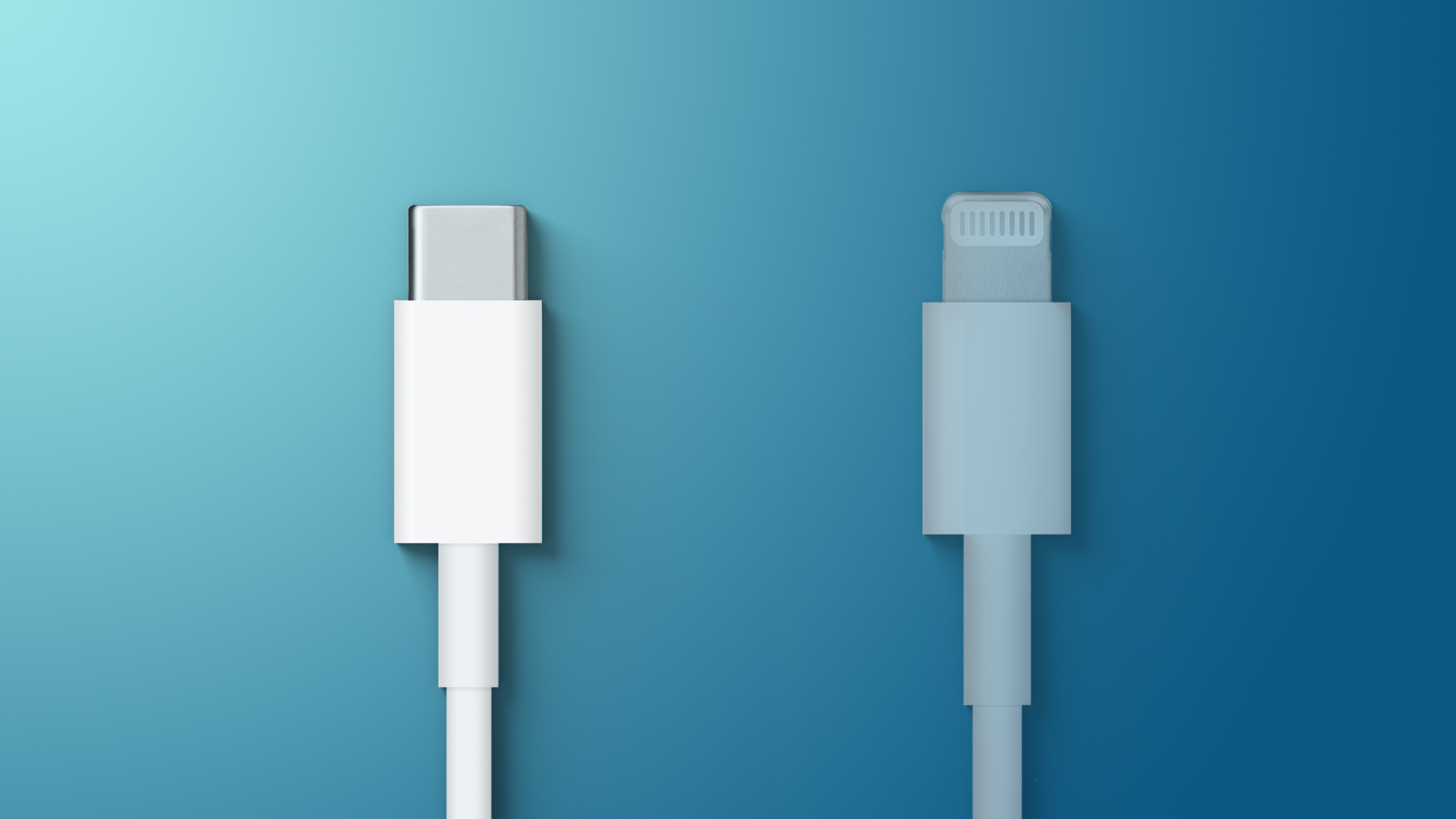

EU Warns Apple About Limiting Speeds of Uncertified USB-C Cables for iPhones

It was rumored in February that Apple may be planning to limit charging speeds and other functionality of USB-C cables that are not certified under its “Made for iPhone” program. Like the Lightning port on existing iPhones, a small chip inside the USB-C port on iPhone 15 models would confirm the authenticity of the USB-C cable connected.

“I believe Apple will optimize the fast charging performance of MFi-certified chargers for the iPhone 15,” Apple analyst Ming-Chi Kuo said in March.

In response to this rumor, European Commissioner Thierry Breton has sent Apple a letter warning the company that limiting the functionality of USB-C cables would not be permitted and would prevent iPhones from being sold in the EU when the law goes into effect, according to German newspaper Die Zeit. The letter was obtained by German press agency DPA, and the report says the EU also warned Apple during a meeting in mid-March.

Given that it has until the end of 2024 to adhere to the law, Apple could still move forward with including an authentication chip in the USB-C port on iPhone 15 models later this year. And with iPhone 16 models expected to launch in September 2024, even those devices would be on the market before the law goes into effect.

The report says the EU intends to publish a guide to ensure a “uniform interpretation” of the legislation by the third quarter of this year.

It is worth emphasizing that Apple potentially limiting the functionality of uncertified USB-C cables connected to iPhone 15 models is only a rumor for now, so it remains to be seen whether or not the company actually moves forward with the alleged plans. iPads with USB-C ports do not have an authentication chip for this purpose.

(Thanks, Manfred!)

This article, “EU Warns Apple About Limiting Speeds of Uncertified USB-C Cables for iPhones” first appeared on MacRumors.com

Meta warns Facebook users about malware disguised as ChatGPT

AI tools are all the rage right now. Everyone is obsessed with them…even hackers.

According to a new report from Facebook parent company Meta, the company’s security team has been tracking new malware threats, including ones that weaponize the current AI trend.

“Over the past several months, we’ve investigated and taken action against malware strains taking advantage of people’s interest in OpenAI’s ChatGPT to trick them into installing malware pretending to provide AI functionality,” Meta writes in a new security report released by the company.

Meta claims that it has discovered “around ten new malware families” that are using AI chatbot tools like OpenAI’s popular ChatGPT to hack into users’ accounts.

One of the more pressing schemes, according to Meta, is the proliferation of malicious web browser extensions that appear to offer ChatGPT functionality. Users download these extensions for Chrome or Firefox, for example, in order to use AI chatbot functionality. Some of these extensions even work and provide the advertised chatbot features. However, the extensions also contain malware that can access a user’s device.

According to Meta, it has discovered more than 1,000 unique URLs that offer malware disguised as ChatGPT or other AI-related tools and has blocked them from being shared on Facebook, Instagram, and WhatsApp.

According to Meta, once a user downloads malware, bad actors can immediately launch their attack and are constantly updating their methods to get around security protocols. In one example, bad actors were able to quickly automate the process of taking over business accounts and granting themselves advertising permissions.

Meta says it has reported the malicious links to the various domain registrars and hosting providers that are used by these bad actors.

In their report, security researchers at Meta also dive into the more technical aspects of recent malware, such as Ducktail and NodeStealer. That report can be read in full.