Advanced AI

Will Federated Learning do for ML what Blockchain has done for DeFi?

Growing concerns about data privacy, coupled with an increasing focus on decentralized, crowdsourced platforms, have given rise to the need for on-device machine learning. In this article, we discuss Federated Learning, a collaborative machine learning method that operates without exchanging users’ original data.

What is Federated Learning?

Federated learning (FL) is a machine learning method that enables training of shared global models on decentralized local data sets.

FL aims to take advantage of multiple participants that can contribute to a global task individually. The participants can be any computing device that is connected to the internet, generates user interaction data, and has enough processing power.

Why FL now?

In mobile computing, users demand fast responses, and the round trip between a user’s device and a central server may be too slow for a good user experience. To overcome this, the model may be placed on the end-user device. But then continual learning becomes a challenge, since each end-user device sees only its own data and not the complete data set.

FL overcomes this challenge by enabling continual learning on edge devices while ensuring that end-user data does not leave those devices. Personal data stays where it was generated, which reduces the possibility of personal data breaches.

FL also gives the model access to richer and more diverse data, even when the data sources do not share that data with one another, which can improve the model. In addition, because the training work is spread across devices rather than concentrated on one powerful central server, FL can make more efficient use of hardware.

A few use cases

Google uses FL techniques in its smartphone keyboard for next-word prediction. Apple’s FL techniques leverage user interaction history, which significantly reduces turnaround times compared to live A/B experimentation. The healthcare and health insurance industries can take advantage of FL because it protects sensitive data by keeping it at the original source. In autonomous vehicles, FL can support real-time decision-making and continual learning, allowing models to improve over time with inputs from different vehicles.

How does it work?

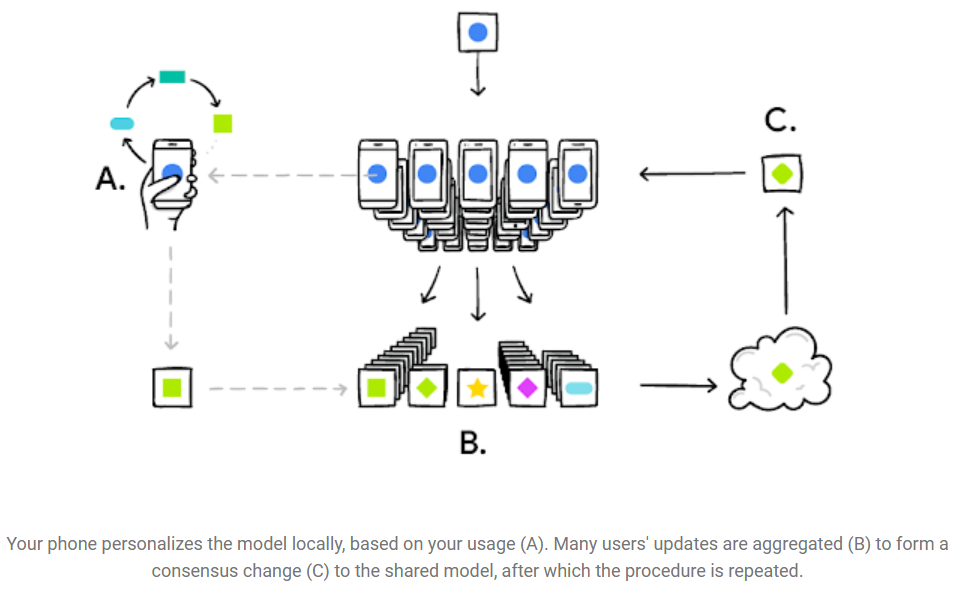

In FL, the data never leaves the user’s devices. Training of ML models occurs locally, using the device’s computing power and data. Only the training meta-information (weights and biases) from the locally trained model is transferred to the central server.

From a pool of candidates, the algorithm chooses a subset of eligible participants based on certain heuristics, so as to minimize the negative impact that local training could have on the user experience. Each participant in the set of eligible devices receives a copy of the global (training) model. Each device then fine-tunes that model locally on its own data. After training, the updated parameters from each local model are sent to the central server for a global update. This training metadata is encrypted on its way from client to server, and the server decrypts only the aggregated training result, so that reverse engineering cannot reveal information about an individual client’s data in this step. Finally, the server aggregates the updates from all clients and starts a new round.
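The round described above can be sketched in a few lines of NumPy. This is a minimal illustration and not a production implementation: the linear model, the helper names `local_update` and `federated_round`, the random client selection (standing in for the eligibility heuristics), and the size-weighted averaging are all assumptions made for the example, and the encryption step is left out.

```python
import numpy as np

def local_update(global_weights, features, labels, lr=0.1, epochs=5):
    """Fine-tune a copy of the global linear model on one client's data."""
    w = global_weights.copy()
    for _ in range(epochs):
        # Gradient of the mean squared error for a linear model.
        grad = features.T @ (features @ w - labels) / len(labels)
        w -= lr * grad
    return w

def federated_round(global_weights, clients, rng, num_selected=3):
    """One round: pick clients, train locally, average weighted by data size."""
    chosen = rng.choice(len(clients), size=num_selected, replace=False)
    updates, sizes = [], []
    for idx in chosen:
        features, labels = clients[idx]
        updates.append(local_update(global_weights, features, labels))
        sizes.append(len(labels))
    # Only the trained weights reach the server; the raw data stays local.
    return np.average(updates, axis=0, weights=sizes)

rng = np.random.default_rng(0)
true_w = np.array([2.0, -1.0])          # hypothetical target model
clients = []
for _ in range(5):
    x = rng.normal(size=(40, 2))        # each client's private features
    clients.append((x, x @ true_w))     # noiseless labels for simplicity

weights = np.zeros(2)
for _ in range(30):
    weights = federated_round(weights, clients, rng)
```

After the rounds finish, `weights` should sit close to `true_w`, even though the server never saw any client's features or labels.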

Zero-Sum Masking

This kind of protection is often described as a zero-sum masking protocol. Each client adds random masks to its update before sending it, and the masks are constructed so that, taken together across clients, they sum to zero. In one direction, the masks hide each client’s update on its way to the server; in the other, they cancel each other out when the server adds up the masked updates, so that only the aggregated training result is revealed.

Here are a few points to note about FL:

- Most ML algorithms assume the data is IID (independent and identically distributed). In FL, however, each participant holds data from its own usage only, so we cannot assume that any one client’s portion of the data represents the entire population.

- FL is best applied in situations where the on-device data is far more relevant than the data that exists on servers. In today’s hyper-personalized world, that is indeed the case more often than not.

- FL’s distributed testing brings back the benefits of testing the new version of the model where it matters the most, that is, on the users’ devices. Since the server does not have access to the training data, it cannot test the combined model after updating it using the clients’ contributions. For this reason, training and testing occur on users’ devices.
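The zero-sum masking idea described earlier can also be made concrete with a toy example. The sketch below assumes pairwise masks: every pair of clients shares a random vector that one adds and the other subtracts. The helper name `pairwise_masks` is hypothetical, and real protocols typically add key agreement, finite-field arithmetic, and dropout handling, none of which is shown here.

```python
import numpy as np

def pairwise_masks(num_clients, dim, rng):
    """Build one mask per client so that all masks together sum to zero."""
    masks = np.zeros((num_clients, dim))
    for i in range(num_clients):
        for j in range(i + 1, num_clients):
            shared = rng.normal(size=dim)   # secret known only to clients i and j
            masks[i] += shared              # client i adds it
            masks[j] -= shared              # client j subtracts it
    return masks

rng = np.random.default_rng(42)
updates = rng.normal(size=(4, 3))    # hypothetical model updates from 4 clients
masks = pairwise_masks(4, 3, rng)

masked = updates + masks             # what each client sends to the server
aggregate = masked.sum(axis=0)       # the masks cancel out in the sum
```

Each row of `masked` looks like noise on its own, yet `aggregate` equals the sum of the true updates, which is all the server needs for the global update.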

Implementation

An implementation of FL is already available in TensorFlow Federated (TFF), which can be installed as the tensorflow-federated-nightly package.

Below is an illustrative code snippet to build a super-simple FL model for a classification task involving 3 classes.

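    !pip install --quiet --upgrade tensorflow-federated-nightly

    import functools
    import numpy as np
    import tensorflow as tf
    import tensorflow_federated as tff

    np.random.seed(0)

    # Assumed batch layout: flattened 28x28 inputs and one integer label per example.
    INPUT_SPEC = (
        tf.TensorSpec(shape=[None, 784], dtype=tf.float32),
        tf.TensorSpec(shape=[None, 1], dtype=tf.int32))

    def create_model(num_classes: int):
        # Build a fresh Keras model on each call, then wrap it for TFF.
        model = tf.keras.models.Sequential([
            tf.keras.layers.InputLayer(input_shape=(784,)),
            tf.keras.layers.Dense(num_classes),
            tf.keras.layers.Softmax()])
        return tff.learning.from_keras_model(
            model,
            input_spec=INPUT_SPEC,
            loss=tf.keras.losses.SparseCategoricalCrossentropy(),
            metrics=[tf.keras.metrics.SparseCategoricalAccuracy()])

    # TFF expects a no-argument model-building function, not a model instance.
    # These entry points may have moved or been renamed in newer TFF releases.
    model_fn = functools.partial(create_model, 3)
    evaluation = tff.learning.build_federated_evaluation(model_fn)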

Challenges

Though FL has tremendous potential, it is not devoid of challenges. FL models require frequent communication between nodes, leading to high network bandwidth requirements. However, with recent advances in 5G technology and its faster, more stable connections, FL should become easier to deploy.

In addition, device-specific characteristics may limit the generalization of the models from some devices and may reduce accuracy of the next version of the model.

Alternatives: Gossip Learning

In FL, a central server uses the outputs of other devices to build a new model, so the approach is not fully decentralized. Researchers have proposed Blockchained Federated Learning (BlockFL) and other approaches to build zero-trust models of FL.

Gossip Learning (GL) has been proposed by researchers to address some of the problems of FL. GL is fully decentralized: there is no server for merging outputs from different locations. Instead, local nodes exchange and aggregate models directly. The advantages of GL are clear: since no central infrastructure is required and there is no single point of failure, GL offers significantly cheaper scalability and better robustness.
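The serverless merge step can be illustrated with a small simulation. This is only a sketch under simplifying assumptions: the helper name `gossip_step` is hypothetical, peers are chosen uniformly at random, merging is a plain average, and the local training that a real GL node would perform between exchanges is omitted.

```python
import numpy as np

def gossip_step(models, rng):
    """Pick two distinct nodes at random and replace both models with their average."""
    i, j = rng.choice(len(models), size=2, replace=False)
    merged = (models[i] + models[j]) / 2.0
    models[i] = merged
    models[j] = merged.copy()

rng = np.random.default_rng(1)
models = [rng.normal(size=3) for _ in range(6)]   # one model vector per node
target = np.mean(models, axis=0)                  # the network-wide average

for _ in range(200):
    gossip_step(models, rng)

# Largest distance between any node's model and the network-wide average.
spread = max(np.linalg.norm(m - target) for m in models)
```

Pairwise averaging never changes the network-wide mean, so repeated exchanges pull every node toward the same model without any coordinator.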

Conclusion

Blockchain technology provided the foundation for cryptocurrency and decentralized applications. Together, these tools have created a fast-growing DeFi (decentralized finance) sector. Just as DeFi could open up new avenues for businesses as the industry grows, Federated Learning could revolutionize the world of machine learning. As with any new technology, FL projects will initially meet with varying degrees of success. Business leaders will need to choose which of these projects will add the most value to their business.

Of Collaborations, Gossips, and a Masking Trick was originally published in Coinmonks on Medium, where people are continuing the conversation by highlighting and responding to this story.